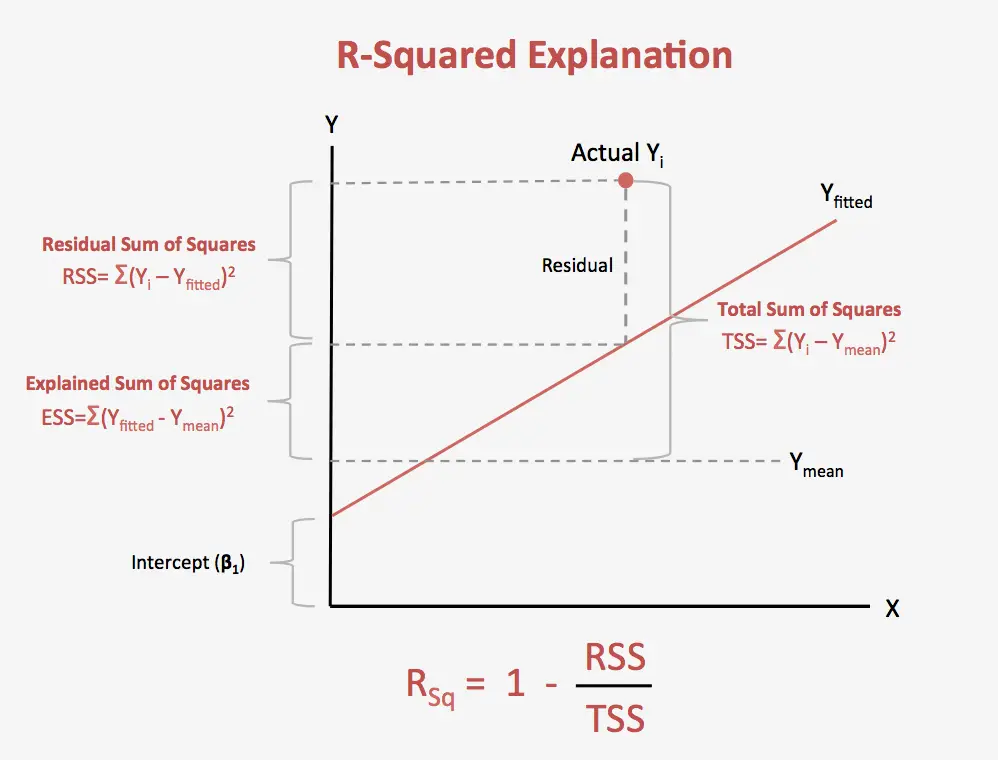

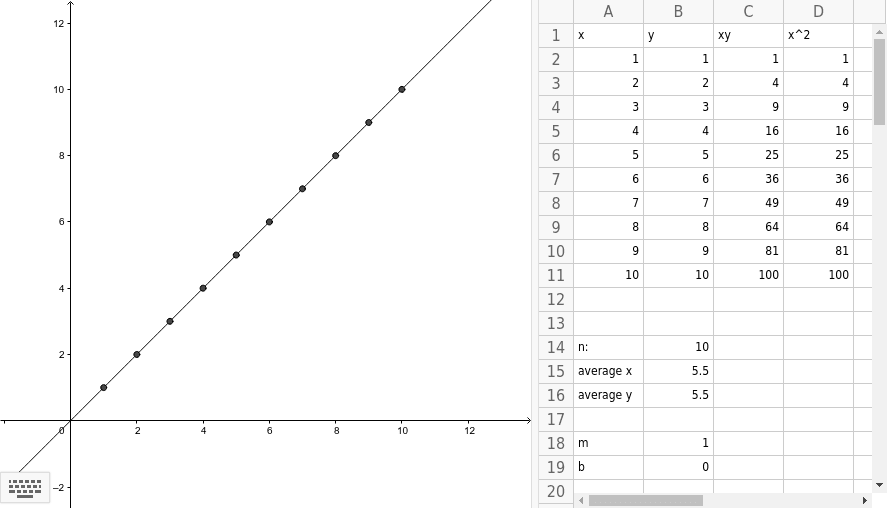

This calculator will determine the values of b and a for a set of data comprising two variables, and estimate the value of Y for any specified value of X. The variable Y can be estimated for any value of the variable X using the line equation and the estimators of β₁ and β₀: ŷ = β̂₁X + β̂₀, where β̂₁ corresponds to the slope b and β̂₀ to the intercept a.

To begin, you need to add paired data into the two text boxes immediately below (either one value per line or as a comma-delimited list), with your independent variable in the X Values box and your dependent variable in the Y Values box. For example, if you wanted to generate a line of best fit for the association between height and shoe size, allowing you to predict shoe size on the basis of a person's height, then height would be your independent variable and shoe size your dependent variable.

Related quantities include the regression line, the total sum of squares (TSS or SST), the explained sum of squares (ESS), and the residual sum of squares (RSS).
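As a rough illustration of what such a calculator computes, here is a minimal sketch of the least squares estimates of b and a, together with the three sums of squares. The function names `fit_line` and `sums_of_squares` and the height and shoe size values are made up for this example; they are not taken from the calculator itself.

```python
def fit_line(xs, ys):
    """Return (b, a) for the least squares line y_hat = b * x + a."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared deviations of x, and sum of cross-deviations of x and y.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx            # slope
    a = mean_y - b * mean_x  # intercept: the line passes through the means
    return b, a


def sums_of_squares(xs, ys, b, a):
    """Return (TSS, ESS, RSS) for the fitted line y_hat = b * x + a."""
    mean_y = sum(ys) / len(ys)
    preds = [b * x + a for x in xs]
    tss = sum((y - mean_y) ** 2 for y in ys)             # total
    ess = sum((p - mean_y) ** 2 for p in preds)          # explained
    rss = sum((y - p) ** 2 for y, p in zip(ys, preds))   # residual
    return tss, ess, rss


# Hypothetical paired data: height in cm (X) and shoe size (Y).
heights_cm = [160, 165, 170, 175, 180]
shoe_sizes = [37, 38, 40, 41, 43]

b, a = fit_line(heights_cm, shoe_sizes)
tss, ess, rss = sums_of_squares(heights_cm, shoe_sizes, b, a)

print(f"slope b = {b:.3f}, intercept a = {a:.3f}")
print(f"TSS = {tss:.3f}, ESS = {ess:.3f}, RSS = {rss:.3f}")
print(f"estimated shoe size at 172 cm: {b * 172 + a:.2f}")
```

For a least squares line with an intercept, TSS equals ESS plus RSS, which is a quick sanity check on any implementation.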

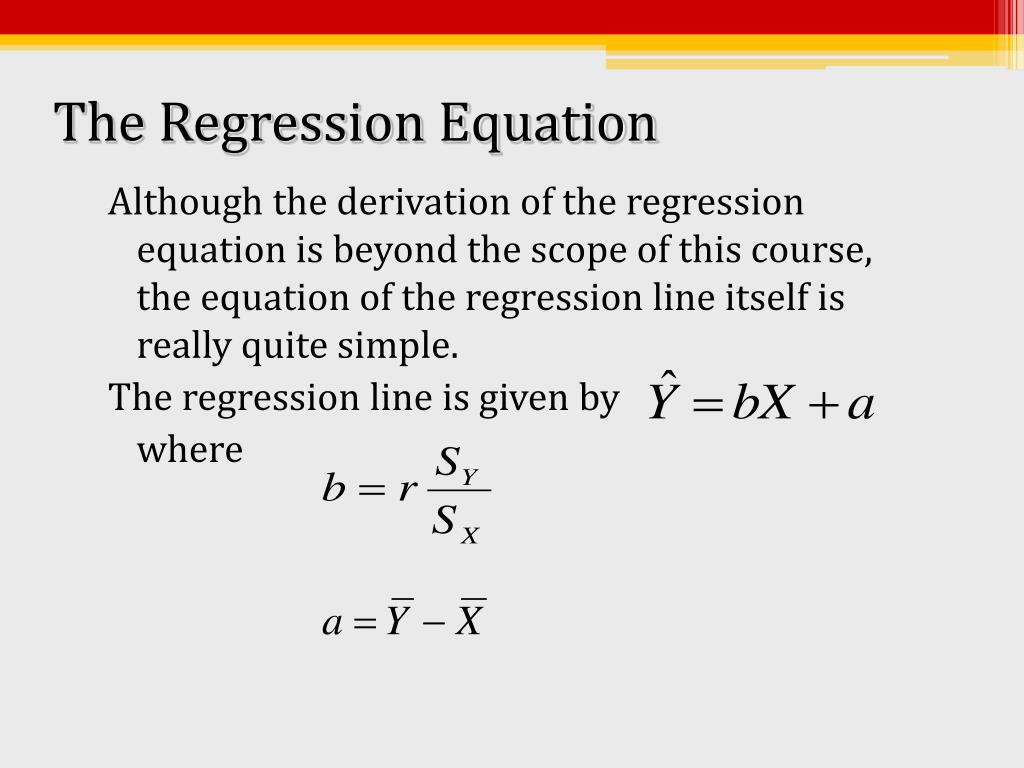

This simple linear regression calculator uses the least squares method to find the line of best fit for a set of paired data, allowing you to estimate the value of a dependent variable (Y) from a given independent variable (X). The line of best fit is described by the equation ŷ = bX + a, where b is the slope of the line and a is the intercept (i.e., the value of Y when X = 0).

Simple linear regression is an approach for predicting a response using a single feature. It is one of the most basic machine learning models that a machine learning enthusiast gets to know about. In linear regression, we assume that the two variables, i.e. the dependent and independent variables, are linearly related. Hence, we try to find a linear function that predicts the response value (y) as accurately as possible as a function of the feature or independent variable (x). Consider a dataset in which we have a value of the response y for every feature x.

[Figure: Residual error plot for the multiple linear regression]

The accuracy score for the multiple linear regression example is determined using the explained variance score. We define:

explained_variance_score = 1 − Var(y − y') / Var(y)

where y' is the estimated target output, y is the corresponding (correct) target output, and Var is the variance, the square of the standard deviation. The best possible score is 1.0; lower values are worse.

Polynomial regression is a form of linear regression in which the relationship between the independent variable x and the dependent variable y is modeled as an nth-degree polynomial. Polynomial regression fits a nonlinear relationship between the value of x and the corresponding conditional mean of y, denoted E(y | x).

Choosing a degree for polynomial regression: the choice of degree is a trade-off between bias and variance. Bias is the tendency of a model to consistently predict the same value, regardless of the true value of the dependent variable.
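The explained variance score can be sketched in a few lines of plain Python. This is an illustration under stated assumptions rather than the code from the original example: the function name mirrors the formula, the sample values are made up, and the population variance is used for both numerator and denominator.

```python
from statistics import pvariance


def explained_variance_score(y_true, y_pred):
    """Return 1 - Var(y - y') / Var(y) for paired true and predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    var_y = pvariance(y_true)
    if var_y == 0:
        raise ValueError("y_true has zero variance; the score is undefined")
    return 1 - pvariance(residuals) / var_y


# Hypothetical targets and predictions.
y_true = [3.0, 5.0, 7.0, 9.0]
y_pred = [2.5, 5.5, 7.0, 9.0]

score = explained_variance_score(y_true, y_pred)
perfect = explained_variance_score(y_true, y_true)

print(f"score with small errors: {score:.3f}")
print(f"score for perfect predictions: {perfect:.3f}")
```

Because the score depends only on the variance of the residuals, predictions that are off by the same constant everywhere still score 1.0, so it is worth checking alongside a measure that is sensitive to a systematic offset.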

There are two main types of linear regression:

- Simple linear regression: This involves predicting a dependent variable based on a single independent variable.
- Multiple linear regression: This involves predicting a dependent variable based on multiple independent variables.

Linear regression also makes the following assumptions about the data:

- Little or no multicollinearity: It is assumed that there is little or no multicollinearity in the data. Multicollinearity occurs when the features (or independent variables) are not independent of each other.
- Little or no autocorrelation: Another assumption is that there is little or no autocorrelation in the data. Autocorrelation occurs when the residual errors are not independent of each other.
- No outliers: We assume that there are no outliers in the data. Outliers are data points that are far away from the rest of the data. Outliers can affect the results of the analysis.
- Homoscedasticity: Homoscedasticity describes a situation in which the error term (that is, the "noise" or random disturbance in the relationship between the independent variables and the dependent variable) is the same across all values of the independent variables.

Figure 1 shows homoscedasticity, while Figure 2 shows heteroscedasticity.
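Two of these assumptions can be checked with simple statistics. The sketch below is not from the original article: it uses the Pearson correlation between two features as a rough indicator of multicollinearity, and the Durbin-Watson statistic as a common check for autocorrelation in residuals. The function names and sample values are hypothetical.

```python
from math import sqrt


def pearson_r(u, v):
    """Pearson correlation between two equally long sequences."""
    n = len(u)
    if n != len(v) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_u = sum(u) / n
    mean_v = sum(v) / n
    suv = sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v))
    suu = sum((a - mean_u) ** 2 for a in u)
    svv = sum((b - mean_v) ** 2 for b in v)
    if suu == 0 or svv == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return suv / sqrt(suu * svv)


def durbin_watson(residuals):
    """Durbin-Watson statistic: sum of squared successive differences
    of the residuals divided by the sum of squared residuals."""
    if len(residuals) < 2:
        raise ValueError("need at least two residuals")
    den = sum(e ** 2 for e in residuals)
    if den == 0:
        raise ValueError("all residuals are zero")
    num = sum((residuals[i] - residuals[i - 1]) ** 2
              for i in range(1, len(residuals)))
    return num / den


# Hypothetical features: feature_b is exactly twice feature_a,
# so the two are perfectly collinear.
feature_a = [1.0, 2.0, 3.0, 4.0, 5.0]
feature_b = [2.0, 4.0, 6.0, 8.0, 10.0]
r = pearson_r(feature_a, feature_b)

# Hypothetical residuals that flip sign at every step
# (strong negative autocorrelation).
alternating = [1.0, -1.0, 1.0, -1.0]
dw = durbin_watson(alternating)

print(f"feature correlation: {r:.3f}")
print(f"Durbin-Watson statistic: {dw:.3f}")
```

A correlation close to 1 or -1 between two features suggests multicollinearity. The Durbin-Watson statistic ranges from 0 to 4: values near 2 suggest little autocorrelation, values near 0 suggest positive autocorrelation, and values near 4 suggest negative autocorrelation.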
